In 2025, the era of single-modality AI is rapidly fading. Multimodal models—systems that can understand and generate across text, images, audio, video, and even code or tabular data simultaneously—are now the dominant force in frontier AI. Models like GPT-4o, Gemini 1.5/2.0, Claude 3.5/4, Grok-4, Llama 4, and open-source leaders such as Qwen2-VL, Phi-3.5-Vision, and Pixtral 12B have made multimodal capabilities table stakes rather than a luxury.

This article explains what multimodal AI really means in practice, why it’s transforming software development, the most impactful models available today, and how developers can start building with them right now.

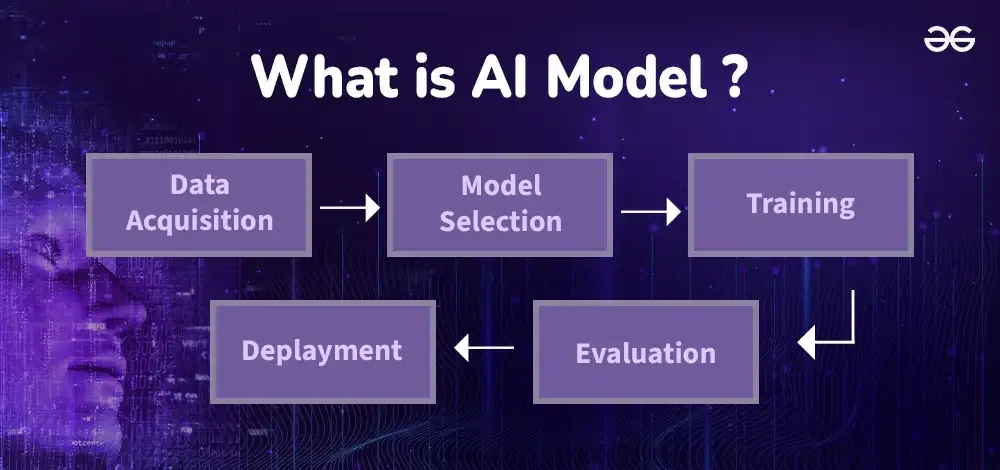

What Makes a Model “Multimodal” in 2025?

A true multimodal model can:

- Accept multiple input types at once (text + image + audio + video)

- Reason across modalities (e.g., “What’s happening in this video and how does it relate to the attached chart?”)

- Generate outputs in multiple formats (text, images, structured data, even short videos or audio)

- Maintain coherent context across modalities (e.g., refer to an image in a long conversation)

Unlike earlier vision-language models, which typically handled a single image per prompt, 2025 models with long context windows can process dozens of images, hours of video, or long audio recordings alongside text.

The Most Powerful Multimodal Models in December 2025

| Model | Provider | Context Window | Vision | Audio | Video | Open Weights | Best For (2025) |
|---|---|---|---|---|---|---|---|
| GPT-4o / GPT-4o-mini | OpenAI | 128K | Yes | Yes | Yes | No | General-purpose, fast & reliable |
| Claude 4 Sonnet/Opus | Anthropic | 200K–500K | Yes | No | Partial | No | Long documents + images, reasoning |
| Gemini 2.0 Flash/Pro | Google | 1M–2M | Yes | Yes | Yes | No | Massive context, video understanding |
| Grok-4 | xAI | 128K | Yes | Yes | Yes | Partial | Real-time knowledge, technical tasks |
| Llama 4 (405B) | Meta | 128K | Yes | Yes | Partial | Yes | Open-source leader, fine-tuning |
| Qwen2-VL-72B | Alibaba | 128K | Yes | No | Yes | Yes | Excellent document + image understanding |
| Pixtral 12B | Mistral | 128K | Yes | No | No | Yes | Fast, lightweight multimodal |
| Phi-3.5-Vision | Microsoft | 128K | Yes | No | No | Yes | On-device multimodal inference |

Real-World Use Cases for Developers in 2025

1. Intelligent Document Processing

- Extract tables, charts, and handwritten notes from PDFs, scanned invoices, or contracts

- Example: Feed a 200-page research paper + figures into Claude 4 or Gemini 2.0 and get a structured summary with citations

2. Visual Question Answering & Reasoning

- “What’s wrong with this UI screenshot?” or “Compare these two product photos and suggest improvements”

- Developers are building internal tools that let PMs, designers, and engineers ask questions about screenshots or mockups

3. Code Generation with Visual Context

- Upload a screenshot of a dashboard or wireframe and ask the model to generate the corresponding React/Vue code

- Tools like Cursor, GitHub Copilot, and Claude Artifacts now support this natively

4. Video Understanding & Summarization

- Automatically generate timestamps, chapter titles, and key takeaways from YouTube tutorials or internal training videos

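- Gemini 2.0 can take hour-long videos as direct input and answer questions about specific moments; with GPT-4o, the usual approach is to sample frames (and optionally add an audio transcript) and send those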

5. Multimodal RAG (Retrieval-Augmented Generation)

- Search across documents, images, slides, and videos simultaneously

- Example: “Find all slides that mention our Q3 revenue growth and include the charts”

6. Accessibility & Customer Support

- Real-time image description for visually impaired users

- Support agents can upload customer screenshots and get instant troubleshooting steps
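
To make use case 5 a little more concrete, here is a minimal sketch of the retrieval step in a multimodal RAG pipeline. It assumes every asset (document chunk, slide, image, video clip) has already been embedded into one shared vector space by some multimodal embedding model. The tiny three-dimensional vectors, the file names, the `Item` class, and the `search` function are all hypothetical and exist only for illustration.

```python
import math
from dataclasses import dataclass
from typing import List, Optional, Set


@dataclass
class Item:
    """One indexed asset; `vector` is a precomputed embedding (made up here)."""
    source: str          # hypothetical file name or locator
    modality: str        # "text", "slide", "image", or "video"
    vector: List[float]


def cosine(a: List[float], b: List[float]) -> float:
    """Cosine similarity between two equal-length vectors (0.0 if either is all zeros)."""
    dot = sum(x * y for x, y in zip(a, b))
    norm = math.sqrt(sum(x * x for x in a)) * math.sqrt(sum(y * y for y in b))
    return dot / norm if norm else 0.0


def search(query_vec: List[float], items: List[Item], top_k: int = 2,
           modalities: Optional[Set[str]] = None) -> List[Item]:
    """Rank items by similarity to the query, optionally filtering by modality."""
    pool = [i for i in items if modalities is None or i.modality in modalities]
    ranked = sorted(pool, key=lambda i: cosine(query_vec, i.vector), reverse=True)
    return ranked[:top_k]


# Toy index: in a real system these vectors would come from an embedding model.
INDEX = [
    Item("q3_review.pptx#slide4", "slide", [0.9, 0.1, 0.0]),
    Item("q3_revenue_chart.png", "image", [0.8, 0.2, 0.1]),
    Item("onboarding.mp4#00:12:30", "video", [0.0, 0.1, 0.9]),
    Item("hr_policy.pdf#p3", "text", [0.1, 0.9, 0.1]),
]

if __name__ == "__main__":
    query = [1.0, 0.0, 0.0]  # stands in for an embedded "Q3 revenue growth" query
    for hit in search(query, INDEX, modalities={"slide", "image"}):
        print(hit.modality, hit.source)
```

The retrieved items (slides and charts in this toy example) would then be passed to a multimodal model together with the user's question. A production system would swap the linear scan for a vector database, but the ranking idea is the same.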

How Developers Can Start Building with Multimodal Models Today

Option 1: Use Hosted APIs (Fastest)

- OpenAI GPT-4o: gpt-4o and gpt-4o-mini

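- Anthropic Claude 4: Claude Sonnet 4 and Claude Opus 4 (for example, claude-sonnet-4-20250514 and claude-opus-4-20250514; check Anthropic's model list for current identifiers)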

- Google Gemini 2.0: Vertex AI or Gemini API

- xAI Grok-4: xAI API
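
As a rough sketch of what a hosted-API call looks like, the snippet below builds a request body in the shape OpenAI's Chat Completions endpoint accepts for image input: a user message whose `content` is a list of `text` and `image_url` parts, with the image inlined as a base64 data URL. The helper name `build_vision_request` and the placeholder image bytes are assumptions for illustration, and no network call is made. Other providers use different field names, so check their docs before adapting it.

```python
import base64
import json


def build_vision_request(prompt: str, image_bytes: bytes,
                         model: str = "gpt-4o", mime: str = "image/png") -> dict:
    """Return a Chat Completions-style body with one text part and one image part."""
    b64 = base64.b64encode(image_bytes).decode("ascii")
    return {
        "model": model,
        "messages": [{
            "role": "user",
            "content": [
                {"type": "text", "text": prompt},
                {"type": "image_url",
                 "image_url": {"url": f"data:{mime};base64,{b64}"}},
            ],
        }],
    }


if __name__ == "__main__":
    # Placeholder bytes; in practice, read a real file with open(path, "rb").read().
    fake_png = b"\x89PNG\r\n\x1a\n"
    body = build_vision_request("What's wrong with this UI screenshot?", fake_png)
    print(json.dumps(body, indent=2))
```

The resulting dict can be sent as JSON in a POST to `https://api.openai.com/v1/chat/completions` with an `Authorization: Bearer <key>` header, or unpacked into the official Python SDK as `client.chat.completions.create(**body)`.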

Option 2: Run Open-Source Models Locally or on Cloud GPUs

- Llama 4, Qwen2-VL, Pixtral — Use Ollama, LM Studio, or Hugging Face Transformers

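- Recommended hardware: a 24–32 GB consumer GPU (RTX 4090/5090) for smaller models such as Pixtral 12B, ideally quantized; the largest models (Qwen2-VL-72B, Llama 4) generally need multi-GPU setups or cloud GPUs (RunPod, Vast.ai, Lambda Labs)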

- Tools like ComfyUI + vision nodes or vLLM make multimodal inference easy
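
For the local route, here is a small sketch that targets Ollama's `/api/chat` endpoint, which takes images as a list of base64 strings on the message itself. The model tag `llava` is only a placeholder for whichever vision-capable model you have pulled, and the helper names `build_ollama_chat` and `send` are made up for this example. `send` assumes an Ollama server is already running on its default port.

```python
import base64
import json
import urllib.request

OLLAMA_URL = "http://localhost:11434/api/chat"  # Ollama's default local address


def build_ollama_chat(prompt: str, image_bytes: bytes, model: str = "llava") -> dict:
    """Build a non-streaming /api/chat body with one base64-encoded image."""
    return {
        "model": model,  # assumption: any vision-capable model you have pulled
        "stream": False,
        "messages": [{
            "role": "user",
            "content": prompt,
            "images": [base64.b64encode(image_bytes).decode("ascii")],
        }],
    }


def send(body: dict, url: str = OLLAMA_URL) -> str:
    """POST the body to a running Ollama server and return the reply text."""
    req = urllib.request.Request(
        url,
        data=json.dumps(body).encode("utf-8"),
        headers={"Content-Type": "application/json"},
    )
    with urllib.request.urlopen(req) as resp:
        return json.loads(resp.read())["message"]["content"]
```

With a server running, usage would look like `send(build_ollama_chat("Describe this screenshot.", open("screenshot.png", "rb").read()))`, where `screenshot.png` is any local image. Nothing leaves your machine, which is the main appeal of this option for sensitive content.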

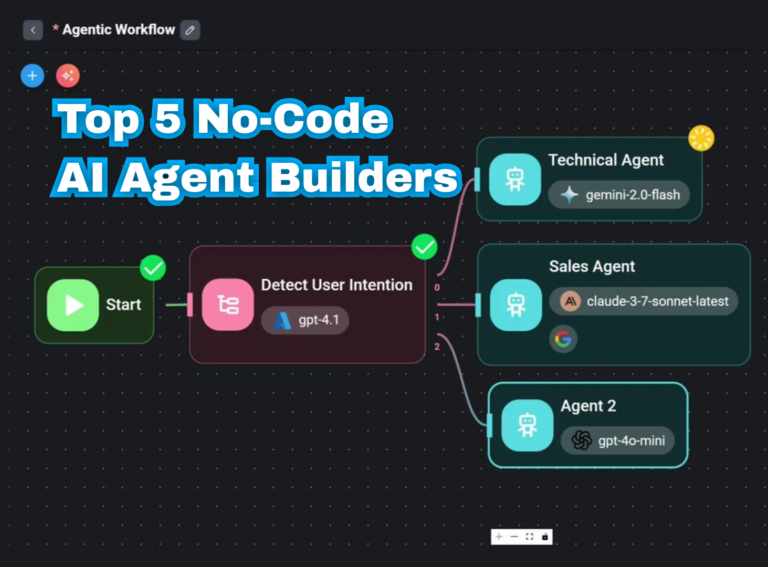

Option 3: No-Code/Low-Code Platforms

- LangChain and LlamaIndex (code-first frameworks) and Flowise (a drag-and-drop builder) support multimodal chains

- Relevance AI and Lindy let you build agents that process images and documents without code

Challenges & Best Practices

- Cost — Multimodal inputs (especially video) are expensive on paid APIs

- Latency — Processing images or video adds seconds to response time

- Hallucinations — Models can still misinterpret visual content; always verify critical outputs

- Privacy — Avoid sending sensitive images to third-party APIs; prefer local/open-source models
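
To get a feel for the cost point, the sketch below estimates input tokens for images and for a video sent as sampled still frames. The image formula follows the tile-based accounting OpenAI has documented for GPT-4o (a flat 85 tokens, plus 170 per 512 px tile after downscaling). Treat the constants as assumptions that can change, and note that other providers, and native video inputs, are counted differently. The function names are hypothetical, and sending video as evenly spaced frames is one common workaround rather than the only option.

```python
import math
from typing import List


def image_tokens(width: int, height: int, detail: str = "high") -> int:
    """Estimate input tokens for one image under tile-based accounting."""
    if detail == "low":
        return 85  # low detail is a flat cost regardless of size
    # Step 1: shrink to fit inside 2048 x 2048, keeping the aspect ratio.
    scale = min(1.0, 2048 / max(width, height))
    w, h = width * scale, height * scale
    # Step 2: shrink again so the shortest side is at most 768 px.
    scale = min(1.0, 768 / min(w, h))
    w, h = w * scale, h * scale
    # Step 3: 170 tokens per 512 px tile, plus a flat 85.
    tiles = math.ceil(w / 512) * math.ceil(h / 512)
    return 85 + 170 * tiles


def sample_timestamps(duration_s: float, max_frames: int) -> List[float]:
    """Evenly spaced timestamps in seconds, one from the middle of each segment."""
    if duration_s <= 0 or max_frames <= 0:
        return []
    step = duration_s / max_frames
    return [round(step * (i + 0.5), 2) for i in range(max_frames)]


def video_frame_tokens(duration_s: float, max_frames: int,
                       width: int, height: int, detail: str = "high") -> int:
    """Token estimate when a video is sent as sampled still frames."""
    frames = len(sample_timestamps(duration_s, max_frames))
    return frames * image_tokens(width, height, detail)


if __name__ == "__main__":
    print(image_tokens(1024, 1024))                      # one square screenshot
    print(sample_timestamps(60, 4))                      # 4 frames from a 1-minute clip
    print(video_frame_tokens(3600, 60, 1280, 720))       # 1 hour, 60 frames, high detail
    print(video_frame_tokens(3600, 60, 1280, 720, "low"))
```

Running the numbers before you build helps with both cost and latency: capping the frame count or switching to low detail cuts the token bill sharply, at the price of the model seeing less of the content.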

The Future: What’s Coming in 2026

- Even larger context windows (2M–10M tokens)

- Native video generation and editing

- Real-time multimodal interaction (live video + voice)

- Better on-device multimodal models for privacy and speed

Final Thoughts

Multimodal AI is no longer a “nice-to-have” feature—it’s fundamentally changing how developers build applications. Whether you’re creating internal tools, customer-facing products, or developer platforms, incorporating vision, audio, and video understanding will soon be expected.

Start experimenting today with a simple project: upload a screenshot or short video to your favorite model and ask it to reason about the content. The results will quickly show why multimodal is the next big leap in AI development.

Which multimodal use case are you most excited to build? Let me know in the comments—I’m happy to share code snippets or architecture ideas!