In 2025, bias in machine learning models is no longer just a theoretical concern—it’s a regulatory, reputational, and legal liability. From discriminatory hiring algorithms and unfair credit scoring to biased medical diagnostics and policing tools, biased AI has already caused real harm and drawn heavy scrutiny from regulators (EU AI Act, U.S. Executive Order on AI, California’s AI regulations, etc.).

The good news: bias can be detected, measured, and significantly reduced with the right techniques and processes. This comprehensive guide explains how to build fairer machine learning models in practice, with actionable steps, tools, and best practices that work in real-world production environments as of late 2025.

Why Bias Happens and Why It Matters

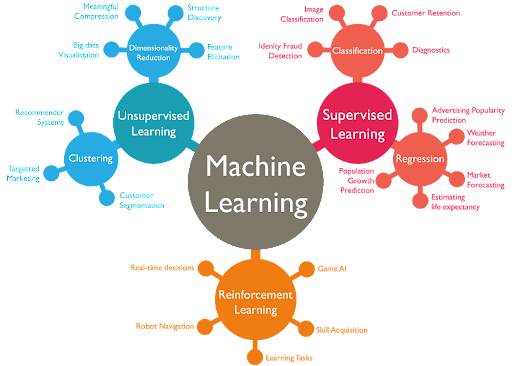

Machine learning models learn patterns from historical data. If that data reflects societal biases—such as under-representation of certain groups, historical discrimination, or skewed labeling—those biases are amplified in the model’s predictions.

Common types of bias in ML:

- Selection bias — Training data not representative of the real-world population

- Label bias — Human annotators introducing subjective judgments

- Proxy bias — Seemingly neutral features (e.g., ZIP code) acting as proxies for protected attributes (race, gender)

- Algorithmic bias — Model architecture or optimization favoring certain outcomes

Consequences in 2025:

- Legal fines (EU AI Act high-risk systems can face up to 6% of global revenue)

- Public backlash and loss of trust

- Poor performance in diverse real-world settings

Step-by-Step: How to Prevent and Mitigate Bias in Machine Learning Models

1. Audit Your Data Early and Often

Bias starts with data.

Best practices:

- Conduct demographic audits: Measure representation of protected attributes (race, gender, age, disability, etc.) across training, validation, and test sets

- Use tools like:

- AIF360 (IBM) – Open-source toolkit with data exploration and bias metrics

- Fairlearn (Microsoft) – Visual dashboards and fairness metrics

- What-If Tool (Google) – Interactive exploration of model behavior across subgroups

- Generate synthetic data to balance underrepresented groups (e.g., using GANs or diffusion models like Stable Diffusion fine-tuned on medical images)

2. Choose Fairness-Aware Data Preprocessing Techniques

Before training, transform the data to reduce bias.

Popular methods:

- Re-weighting — Assign higher weights to underrepresented groups

- Resampling — Oversample minority groups or undersample majority groups

- Counterfactual fairness — Generate synthetic examples where protected attributes are changed (e.g., “what if this applicant were female?”)

- Feature removal or masking — Remove or obfuscate proxies for protected attributes

3. Train with Fairness Constraints

Modern frameworks make it easy to enforce fairness during training.

Techniques:

- In-processing methods:

- Adversarial debiasing (add an adversary that tries to predict protected attributes)

- Constrained optimization (e.g., add fairness penalties to the loss function)

- Post-processing methods:

- Equalized odds or demographic parity thresholds

- Calibrated score adjustment (e.g., Platt scaling per subgroup)

Top libraries in 2025:

- Fairlearn – Microsoft’s library with built-in mitigation algorithms

- AIF360 – Comprehensive toolkit with 10+ mitigation algorithms

- TensorFlow Fairness – Native support in TF 2.15+

- PyTorch Fairness – Community packages like torch-fairness

4. Evaluate with Multiple Fairness Metrics

No single metric is perfect—use a dashboard of several.

Common fairness metrics (2025):

- Demographic Parity – Equal acceptance rates across groups

- Equalized Odds – Equal true positive and false positive rates

- Equal Opportunity – Equal true positive rates

- Disparate Impact – Ratio of acceptance rates (80% rule)

- Calibration – Predictions equally reliable across groups

Recommended tool: Fairlearn’s Fairness Dashboard or AIF360’s metrics module—both generate interactive reports.

5. Implement Continuous Monitoring in Production

Bias can drift over time as data distributions change.

Best practices:

- Set up automated fairness monitoring pipelines (e.g., with Evidently AI or Arize)

- Trigger alerts when subgroup performance diverges

- Retrain models periodically with new, more balanced data

- Maintain audit trails of model versions and fairness metrics

6. Involve Diverse Teams and Domain Experts

Technical solutions alone aren’t enough.

- Include ethicists, sociologists, and representatives from affected communities in design and review

- Conduct red-teaming exercises to probe for edge cases

- Use participatory design methods to gather feedback from end users

Real-World Case Studies (2025)

- Hiring platform — A major tech company reduced gender bias in resume screening by 68% using adversarial debiasing and demographic parity constraints.

- Credit scoring startup — Implemented equalized odds post-processing and saw disparate impact drop from 0.72 to 0.91 (closer to 1.0 = fair).

- Healthcare provider — Used synthetic data augmentation to improve diagnostic accuracy for underrepresented ethnic groups by 22%.

Quick Comparison: Popular Fairness Toolkits (Late 2025)

| Toolkit | Best For | Ease of Use | Metrics | Mitigation Algorithms | Active Community |

|---|---|---|---|---|---|

| Fairlearn | Production monitoring & mitigation | High | 15+ | 8+ | Very strong |

| AIF360 | Research & experimentation | Medium | 20+ | 15+ | Strong |

| What-If Tool | Interactive exploration | High | Basic | None | Google-backed |

| Evidently AI | Monitoring & drift detection | High | 10+ | Limited | Growing |

Final Thoughts

Preventing bias in machine learning is not a one-time checklist—it’s an ongoing process of measurement, mitigation, and monitoring. In 2025, regulators and users expect organizations to demonstrate fairness, not just claim it.

By integrating fairness tools from day one, involving diverse stakeholders, and treating fairness as a core performance metric (alongside accuracy and speed), you can build AI systems that are both powerful and trustworthy.

Start small: Pick one high-impact model in your organization, run a fairness audit, and apply one mitigation technique. The results will likely surprise you—and the risk reduction will be substantial.

What fairness challenges have you encountered in your ML projects? Share your experiences in the comments—I’d love to discuss solutions!