In 2025, generative AI is transforming healthcare at an unprecedented pace. From drafting clinical notes and summarizing patient records to generating synthetic medical images, personalized treatment plans, and even drug discovery hypotheses, tools like Claude, GPT-4o, Llama 3.3, and specialized medical models (Med-PaLM 2, BioGPT, etc.) are being deployed in hospitals, clinics, and pharma companies worldwide.

While the potential benefits are enormous—faster diagnoses, reduced administrative burden, and more equitable care—the ethical risks are equally significant. Poorly implemented generative AI can amplify biases, erode patient trust, violate privacy, and even cause harm. This article outlines the most critical ethical considerations for healthcare organizations and developers implementing generative AI in 2025, along with practical frameworks and best practices.

1. Bias and Fairness in Medical AI

Generative AI models are trained on vast datasets that often reflect historical healthcare disparities.

Key risks:

- Racial, gender, and socioeconomic biases in diagnostic suggestions or treatment recommendations

- Under-representation of certain populations (e.g., patients with rare diseases or non-Western patient profiles), leading to poorer performance for those groups

- “Hallucinated” medical facts that disproportionately affect marginalized groups

Real-world example (2025): A major U.S. health system paused its generative AI note-summarization tool after it was found to systematically omit social determinants of health (e.g., housing instability) more often for Black and Hispanic patients, skewing risk scores.

Mitigation strategies:

- Conduct rigorous bias audits using datasets like MIMIC-IV, UK Biobank, and diverse synthetic cohorts

- Use techniques such as debiasing prompts, counterfactual data augmentation, and fairness-aware fine-tuning

- Require transparency reports showing performance across demographic subgroups before deployment
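Here is a minimal sketch of the subgroup reporting described above. It is an illustration, not a validated audit tool. The Python computes one metric (recall) per demographic group and flags groups that trail the best-performing one. It assumes each record is a dict with hypothetical fields: a group label, whether a reviewer found housing instability documented in the chart, and whether the AI summary kept it. The 0.8 threshold is an illustrative choice, not a standard.

```python
from collections import defaultdict

def subgroup_recall(records, group_key, label_key, pred_key):
    """Per-group recall: among records where the reference label is True,
    the fraction where the model's output was also True."""
    counts = defaultdict(lambda: [0, 0])  # group -> [hits, positives]
    for r in records:
        if r[label_key]:
            counts[r[group_key]][1] += 1
            if r[pred_key]:
                counts[r[group_key]][0] += 1
    return {g: hits / pos for g, (hits, pos) in counts.items()}

def flag_disparities(report, min_ratio=0.8):
    """Return groups whose recall is below min_ratio times the best group's recall."""
    best = max(report.values())
    return sorted(g for g, recall in report.items() if recall < min_ratio * best)

# Hypothetical audit sample: did the AI summary keep documented housing instability?
records = [
    {"group": "A", "sdoh_in_chart": True, "sdoh_in_summary": True},
    {"group": "A", "sdoh_in_chart": True, "sdoh_in_summary": True},
    {"group": "B", "sdoh_in_chart": True, "sdoh_in_summary": False},
    {"group": "B", "sdoh_in_chart": True, "sdoh_in_summary": True},
    {"group": "B", "sdoh_in_chart": False, "sdoh_in_summary": False},
]
report = subgroup_recall(records, "group", "sdoh_in_chart", "sdoh_in_summary")
print(report)                    # {'A': 1.0, 'B': 0.5}
print(flag_disparities(report))  # ['B']
```

With real data, per-group sample sizes matter. A gap computed from a handful of records should get a confidence interval before anyone treats it as evidence of bias.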

2. Patient Privacy and Data Protection

Generative AI often requires access to sensitive electronic health records (EHRs), imaging, and genomic data.

Key risks:

- Re-identification risk even with de-identified data, especially when large context windows let a model combine many quasi-identifiers (ZIP code, dates, rare diagnoses) about the same patient

- Accidental leakage of PHI through model outputs or third-party APIs

- Cross-border data flows violating GDPR, HIPAA, or emerging global AI regulations
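One common way to quantify the re-identification risk above is k-anonymity. The idea is that every combination of quasi-identifiers should be shared by at least k records. Quasi-identifiers are fields such as ZIP prefix, birth year, and sex, which are harmless on their own but identifying in combination. The minimal Python sketch below uses hypothetical field names. It shows how adding a single field can drop k to 1, which mirrors what happens when a long context window gathers many details about one patient. k-anonymity is only one lens and does not protect against every linkage attack.

```python
from collections import Counter

def k_anonymity(rows, quasi_identifiers):
    """Size of the smallest group of rows sharing identical quasi-identifier
    values. k == 1 means some record is unique on those fields."""
    if not rows:
        return 0
    groups = Counter(tuple(row[q] for q in quasi_identifiers) for row in rows)
    return min(groups.values())

# Hypothetical "de-identified" extract: no names or MRNs, yet still linkable.
rows = [
    {"zip3": "021", "birth_year": 1950, "sex": "F"},
    {"zip3": "021", "birth_year": 1950, "sex": "M"},
    {"zip3": "021", "birth_year": 1950, "sex": "M"},
    {"zip3": "946", "birth_year": 1982, "sex": "F"},
    {"zip3": "946", "birth_year": 1982, "sex": "F"},
]
print(k_anonymity(rows, ["zip3", "birth_year"]))         # 2
print(k_anonymity(rows, ["zip3", "birth_year", "sex"]))  # 1
```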

Best practices in 2025:

- Prefer local or on-premises deployments of open-source models (e.g., Llama 3.3 70B fine-tuned on private data)

- Apply differential privacy during fine-tuning (e.g., DP-SGD) to limit how much the model can memorize about any individual patient

- Use secure multi-party computation or federated learning for collaborative training without sharing raw data

- Require explicit patient consent for any generative AI use in their care pathway
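Differential privacy during fine-tuning usually means DP-SGD (Abadi et al., 2016). Each training example's gradient is clipped so that no single patient can move the model too far, and calibrated Gaussian noise is then added. The NumPy sketch below covers only that aggregation step, under simplified assumptions. It does not track the privacy budget (epsilon), which real fine-tuning requires. Libraries such as Opacus (PyTorch) and TensorFlow Privacy implement the full procedure with privacy accounting.

```python
import numpy as np

def dp_sgd_gradient(per_example_grads, clip_norm, noise_multiplier, rng):
    """One DP-SGD aggregation step over a batch of per-example gradients.

    1. Clip each example's gradient to L2 norm <= clip_norm.
    2. Sum the clipped gradients.
    3. Add Gaussian noise with std = noise_multiplier * clip_norm.
    4. Average over the batch.
    """
    grads = np.asarray(per_example_grads, dtype=float)  # shape (batch, dim)
    norms = np.linalg.norm(grads, axis=1, keepdims=True)
    # Gradients already inside the clip ball keep a scale factor of 1.
    scale = np.minimum(1.0, clip_norm / np.maximum(norms, 1e-12))
    clipped_sum = (grads * scale).sum(axis=0)
    noise = rng.normal(0.0, noise_multiplier * clip_norm, size=clipped_sum.shape)
    return (clipped_sum + noise) / grads.shape[0]

rng = np.random.default_rng(0)
batch = [[3.0, 4.0], [0.3, 0.4]]  # L2 norms 5.0 and 0.5
# noise_multiplier=0.0 disables noise so the clipping can be checked by hand:
# the result is approximately [0.45, 0.6]. Real training uses a value > 0.
print(dp_sgd_gradient(batch, clip_norm=1.0, noise_multiplier=0.0, rng=rng))
```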

3. Transparency, Explainability, and Informed Consent

Patients and clinicians need to understand when and how AI is involved in their care.

Key challenges:

- Generative models are often black boxes; even “chain-of-thought” explanations may not faithfully reflect how the model actually reached its output

- Clinicians may over-rely on AI suggestions (automation bias)

- Patients may not realize AI generated parts of their discharge instructions or radiology reports

Recommended approaches:

- Always disclose AI involvement (e.g., “This summary was generated with assistance from an AI tool and reviewed by Dr. Smith”)

- Provide human-readable explanations alongside outputs (e.g., “This diagnosis suggestion is based on 3 similar cases from 2023–2025”)

- Implement “human-in-the-loop” workflows for high-stakes decisions (diagnosis, treatment planning, informed consent)
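The disclosure and human-in-the-loop recommendations can be enforced in software rather than left to habit. The Python sketch below is one hypothetical design and does not refer to any real EHR API. An AI draft always carries a disclosure when it is released. A draft marked high-stakes cannot be released at all until a named clinician signs off.

```python
from dataclasses import dataclass
from typing import Optional

REVIEWED_NOTICE = ("This summary was generated with assistance from an AI tool "
                   "and reviewed by {reviewer}.")
UNREVIEWED_NOTICE = ("This text was generated with assistance from an AI tool "
                     "and has not been reviewed by a clinician.")

@dataclass
class AIDraft:
    """Hypothetical wrapper around an AI-generated draft (not a real EHR API)."""
    text: str
    high_stakes: bool               # e.g., diagnosis or treatment planning
    reviewer: Optional[str] = None  # set only when a clinician signs off

    def sign_off(self, clinician: str) -> None:
        self.reviewer = clinician

    def release(self) -> str:
        """Return patient-facing text with an AI-involvement disclosure.
        High-stakes drafts cannot be released without a named reviewer."""
        if self.reviewer is not None:
            return f"{self.text}\n\n{REVIEWED_NOTICE.format(reviewer=self.reviewer)}"
        if self.high_stakes:
            raise PermissionError("High-stakes AI draft requires clinician sign-off.")
        return f"{self.text}\n\n{UNREVIEWED_NOTICE}"

draft = AIDraft("Draft discharge instructions ...", high_stakes=True)
draft.sign_off("Dr. Smith")
print(draft.release())
```

The sketch raises an error instead of returning unreviewed text with a warning. That choice makes skipping review an explicit failure rather than a silent default.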

4. Accountability and Liability

When generative AI makes a mistake, who is responsible?

Current landscape (2025):

- Most jurisdictions still place liability on the clinician or healthcare organization, not the AI vendor

- Some states (California, New York) have introduced “AI liability” laws requiring clear documentation of model provenance and audit trails

Practical steps:

- Maintain detailed audit logs of every AI interaction (prompts, model version, outputs, human edits)

- Establish clear governance committees that review AI use cases quarterly

- Include indemnity clauses in vendor contracts for enterprise-grade models
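Below is a minimal sketch of the audit-log practice in Python, using only the standard library. It records the fields listed above (prompt, model version, output, human edit) plus a timestamp. As an added assumption beyond the source, it also chains each entry to the previous one with a SHA-256 hash, so later edits to an entry become detectable. Entries are kept in memory for brevity. A real deployment would write them to access-controlled, append-only storage, because the prompts themselves contain PHI.

```python
import hashlib
import json
from datetime import datetime, timezone

GENESIS = "0" * 64  # placeholder "previous hash" for the first entry

class AuditLog:
    """In-memory, hash-chained log of AI interactions (illustrative only)."""

    def __init__(self):
        self.entries = []

    @staticmethod
    def _digest(entry):
        # Hash every field except the stored hash itself, in a stable key order.
        body = {k: v for k, v in entry.items() if k != "hash"}
        return hashlib.sha256(json.dumps(body, sort_keys=True).encode("utf-8")).hexdigest()

    def record(self, prompt, model_version, output, human_edit=None):
        entry = {
            "timestamp": datetime.now(timezone.utc).isoformat(),
            "model_version": model_version,
            "prompt": prompt,
            "output": output,
            "human_edit": human_edit,
            "prev_hash": self.entries[-1]["hash"] if self.entries else GENESIS,
        }
        entry["hash"] = self._digest(entry)
        self.entries.append(entry)
        return entry

    def verify(self):
        """True if no entry was altered, reordered, or removed from the middle.
        (Truncating the newest entries is not detected by the chain alone.)"""
        prev = GENESIS
        for entry in self.entries:
            if entry["prev_hash"] != prev or entry["hash"] != self._digest(entry):
                return False
            prev = entry["hash"]
        return True

log = AuditLog()
log.record("Summarize progress note", "summarizer-v1.2", "Draft summary ...")
log.record("Summarize progress note", "summarizer-v1.2", "Draft summary ...",
           human_edit="Corrected medication list")
print(log.verify())  # True
```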

5. Misinformation and Hallucination in Clinical Contexts

Generative AI can confidently produce plausible but incorrect medical information.

Examples of harm:

- Fabricated drug interactions in treatment plans

- Incorrect summaries of patient history leading to wrong medication orders

- AI-generated radiology reports missing subtle findings

Mitigation techniques:

- Use retrieval-augmented generation (RAG) with trusted medical knowledge bases (UpToDate, PubMed, clinical guidelines)

- Implement guardrails that force the model to cite sources or say “I don’t know” when confidence is low

- Require double-review for any AI-generated clinical documentation
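The first two mitigations can be combined into one flow. Retrieve passages from a vetted knowledge base, instruct the model to answer only from them with citations, and reject any answer whose citations do not match what was retrieved. The Python sketch below shows that control flow under simplifying assumptions. The word-overlap retriever is a hypothetical stand-in for a real search index, the passages are placeholders, and the LLM call itself is left out. The guard only checks that cited IDs are real. It cannot confirm that a cited passage actually supports the claim, which is why clinician review is still needed.

```python
import re

def tokenize(text):
    return set(re.findall(r"[a-z0-9]+", text.lower()))

def retrieve(question, knowledge_base, top_k=3, min_overlap=2):
    """Toy retriever: rank passages by words shared with the question.
    A real system would use a search index or embeddings instead."""
    q = tokenize(question)
    scored = [(len(q & tokenize(text)), pid) for pid, text in knowledge_base.items()]
    scored = sorted((s for s in scored if s[0] >= min_overlap), reverse=True)
    return [pid for _, pid in scored[:top_k]]

def build_prompt(question, knowledge_base, passage_ids):
    sources = "\n".join(f"[{pid}] {knowledge_base[pid]}" for pid in passage_ids)
    return ("Answer using ONLY the sources below and cite each claim as [ID]. "
            "If the sources do not answer the question, reply exactly: I don't know.\n\n"
            f"{sources}\n\nQuestion: {question}")

def guard(answer, passage_ids):
    """Reject answers with no citations or with citations to unretrieved sources."""
    cited = set(re.findall(r"\[([A-Za-z0-9_-]+)\]", answer))
    if not passage_ids or not cited or not cited <= set(passage_ids):
        return "I don't know"
    return answer

kb = {  # hypothetical placeholders for vetted guideline passages
    "G1": "Guideline text on co-prescribing drug alpha with drug beta.",
    "G2": "Guideline text on drug gamma dosing in renal impairment.",
}
question = "Can drug alpha be co-prescribed with drug beta?"
ids = retrieve(question, kb)              # ['G1']
prompt = build_prompt(question, kb, ids)  # send to the model (call not shown)
print(guard("Avoid the combination [G9].", ids))  # I don't know
```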

6. Equity and Access

Generative AI can widen healthcare disparities if only well-funded institutions adopt it.

Considerations:

- Smaller rural hospitals may lack the infrastructure or expertise to deploy safe AI

- Low-resource settings in developing countries risk being left behind

Solutions:

- Support open-source medical models whose smaller variants can run on modest hardware (e.g., Meditron-7B, or BioMedLM at 2.7B parameters)

- Advocate for public-private partnerships to subsidize AI infrastructure in underserved areas

- Participate in initiatives like the WHO’s AI for Health governance framework
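A back-of-envelope estimate makes "modest hardware" concrete. Memory for the weights alone is roughly the parameter count × bits per parameter / 8 bytes. The small Python helper below applies that rule to the published sizes of BioMedLM (2.7B parameters) and Meditron (7B and 70B variants). It ignores activations, the KV cache, and framework overhead, so actual requirements are higher. The 4-bit rows also assume that a quantized build exists and is accurate enough for the task.

```python
def weight_memory_gb(params_billion, bits_per_param):
    """Approximate memory for model weights only, in GB (1 GB = 1e9 bytes):
    params_billion * 1e9 params * (bits / 8) bytes / 1e9."""
    return params_billion * bits_per_param / 8

for name, params in [("BioMedLM", 2.7), ("Meditron-7B", 7), ("Meditron-70B", 70)]:
    for bits in (16, 4):
        print(f"{name:13s} {bits:2d}-bit: ~{weight_memory_gb(params, bits):.1f} GB")
```

By this estimate, a 7B model quantized to 4 bits needs about 3.5 GB for its weights, which fits on a single consumer GPU. A 70B model needs about 35 GB even at 4 bits.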

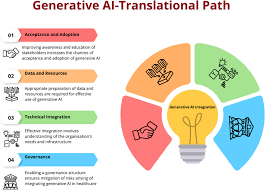

Ethical Frameworks and Guidelines in 2025

Several authoritative resources guide responsible implementation:

- HHS AI in Healthcare Blueprint (U.S.)

- EU AI Act High-Risk Classification for medical AI

- AMA Principles for AI in Medicine

- WHO Ethics and Governance of AI for Health

- Partnership on AI’s Healthcare Working Group

Many leading health systems now require generative AI projects to complete an ethics review based on one of these frameworks.

Final Thoughts

Generative AI has the potential to make healthcare more efficient, accurate, and accessible—but only if deployed responsibly. In 2025, the organizations that succeed will be those that treat ethical considerations not as a compliance checkbox, but as a core part of their AI strategy.

By prioritizing bias mitigation, privacy, transparency, accountability, and equity, healthcare leaders can harness generative AI’s power while protecting patients and maintaining public trust.

If your organization is exploring generative AI in healthcare, start with a small, well-governed pilot and an independent ethics review. The lessons learned will be invaluable as the technology continues to evolve.

What ethical challenges have you encountered when implementing AI in healthcare? Share your experiences in the comments—I’d love to hear real-world insights!